We are Cardano

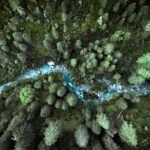

Our world deserves better financial solutions, ones that are more resilient and sustainable. Delivering them demands that we do things differently.

At Cardano, we bring a distinct approach to advisory and investment management that challenges the status quo.

We help pension schemes make and implement their most important decisions about long-term objectives, investment strategy and risk management. Circumstances vary from client to client, but our overarching belief is that people deserve a different and more resilient pension solution. That means we strive to achieve acceptable outcomes for Trustees, members and corporate sponsors, no matter what happens to economies and markets.

Learn more about how our advisory and investment businesses have come together to deliver better pension solutions for everyone.